LLMs: The Foundation of AI in 2026*

Words and Concepts Are Not the Same: Why LLMs Aren’t Truly Thinking

Words and concepts are not the same. Any dictionary proves this: a single word often carries several distinct meanings. The relationship between ideas and words is “many to one.”

This may seem obvious. We use words every day to communicate, solve problems, and run our businesses. But the difference becomes critical when we examine how today’s artificial intelligence actually works.

How Large Language Models Work

Large Language Models (LLMs) power tools like ChatGPT, Claude, Grok, and Gemini. At their core, they are massive neural networks built on the Transformer architecture. Introduced in 2017, the Transformer lets the model weigh the relationship between every pair of tokens in a sequence all at once.
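To make “weighing relationships between every token” concrete, here is a minimal sketch of scaled dot-product attention, the core operation of the Transformer, in plain Python. The three 2-dimensional token vectors are made-up toy values, and for simplicity the same vectors serve as queries, keys, and values; real models learn separate projections for each, use many attention heads, and run on optimized tensor libraries.

```python
import math

def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this query against every key in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Three toy token vectors (made-up numbers) used as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, weights = attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 2) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence; every row sums to 1.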

Training typically happens in three main stages. First comes pre-training: the model sees trillions of tokens from books, websites, code, and conversations. Its only task is to predict the next token, over and over, until it has learned the statistical patterns of human language. Next comes supervised fine-tuning on high-quality question-and-answer examples, so it learns to follow instructions. Finally, reinforcement learning from human feedback (RLHF) nudges the model toward responses people prefer, making it more helpful, coherent, and safe.
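The pre-training objective can be illustrated with cross-entropy loss, the standard way next-token prediction is scored: the loss is the negative log of the probability the model assigned to the token that actually came next. The probabilities below are invented for illustration, not taken from any real model.

```python
import math

# Hypothetical model output: probabilities for the token after "The capital of France is".
predicted = {"Paris": 0.90, "Lyon": 0.05, "a": 0.03, "the": 0.02}

def next_token_loss(probs, actual_next):
    """Cross-entropy loss for a single prediction: -log P(actual next token)."""
    return -math.log(probs[actual_next])

print(round(next_token_loss(predicted, "Paris"), 3))  # small loss: confident and correct
print(round(next_token_loss(predicted, "Lyon"), 3))   # large loss: the model is penalized
```

Pre-training repeats this over trillions of tokens, adjusting the parameters to push the average loss down.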

The result is a system with billions or trillions of parameters that capture rich statistical associations.

What LLMs Appear to Do Well

Give an LLM the prompt “The capital of France is,” and it outputs “Paris” because that continuation appears over and over in its training data. Ask it to solve a physics problem or write a poem, and it produces fluent, often useful text.
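An LLM does not look answers up in a table, but the statistical intuition behind “Paris” can be shown with a much simpler count-based predictor that tallies which word follows a prompt in its training text. The five-sentence corpus is invented for illustration, and this n-gram-style counting is a stand-in for, not a description of, how a Transformer stores patterns.

```python
from collections import Counter

# A tiny made-up "training corpus"; real pre-training uses trillions of tokens.
corpus = [
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of france is beautiful",
    "the capital of italy is rome",
]

def next_word_distribution(corpus, prompt):
    """Count which word follows the prompt and convert the counts to probabilities."""
    prompt_words = prompt.split()
    n = len(prompt_words)
    counts = Counter()
    for sentence in corpus:
        words = sentence.split()
        for i in range(len(words) - n):
            if words[i:i + n] == prompt_words:
                counts[words[i + n]] += 1
    total = sum(counts.values())
    return {word: c / total for word, c in counts.items()}

dist = next_word_distribution(corpus, "capital of france is")
print(max(dist, key=dist.get), dist)
```

Three of the four matching sentences continue with “paris”, so it gets probability 0.75 and is the most likely next word.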

Modern LLMs handle very long context windows, maintain consistent personas, and show impressive emergent abilities such as step-by-step reasoning. On the surface, the results can look very capable.

What Really Happens Inside

Consider a complex prompt: “Solve this multi-step physics problem, explain your reasoning, and critique your own solution.”

The prompt is tokenized and turned into numerical vectors. These flow through many Transformer layers. Attention mechanisms calculate how strongly each token relates to the others.
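The first step, turning text into numbers, can be sketched as below under simplifying assumptions: it splits on whitespace, whereas production LLMs use learned subword tokenizers (such as byte-pair encoding), and the embedding vectors are random placeholders rather than learned values. The helper names `build_vocab` and `tokenize` are hypothetical.

```python
import random

def build_vocab(texts):
    """Assign each distinct lowercase word an integer ID (toy whitespace tokenizer)."""
    vocab = {}
    for text in texts:
        for word in text.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab

def tokenize(text, vocab):
    """Map each word of the text to its integer ID."""
    return [vocab[word] for word in text.lower().split()]

prompt = "Solve this physics problem and explain your reasoning"
vocab = build_vocab([prompt])
ids = tokenize(prompt, vocab)

# Embedding table: one vector per token ID. Real models learn these values;
# here they are random placeholders of dimension 4.
rng = random.Random(0)
dim = 4
embeddings = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(len(vocab))]
vectors = [embeddings[i] for i in ids]

print(ids)
print(len(vectors), "vectors of length", len(vectors[0]))
```

These vectors are what flows into the first Transformer layer.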

Research suggests LLMs develop internal representations of facts, relationships, and even basic world models. However, they have no direct sensory experience. They have never touched an apple or felt gravity. During training, the model strengthens or weakens connections based purely on patterns in its training data.

It then generates text one token at a time by sampling from probability distributions. Even impressive reasoning is built by extending patterns it has seen before, not by manipulating genuine concepts the way humans do.
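The sampling step can be sketched as follows: raw scores (logits) for candidate tokens pass through a softmax to become a probability distribution, and one token is drawn from it. The logits are made-up numbers, and the `temperature` parameter is a common sampling control assumed here for illustration; lower values concentrate probability on the top choice.

```python
import math
import random

def sample_next_token(logits, temperature=1.0, rng=random):
    """Convert raw scores to probabilities with a softmax, then sample one token."""
    tokens = list(logits)
    scaled = [logits[t] / temperature for t in tokens]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]
    choice = rng.choices(tokens, weights=probs, k=1)[0]
    return choice, dict(zip(tokens, probs))

# Made-up scores a model might assign to candidate next tokens.
logits = {"Paris": 5.0, "Lyon": 2.0, "beautiful": 1.0}
rng = random.Random(42)
token, probs = sample_next_token(logits, temperature=0.7, rng=rng)
print(token, {t: round(p, 3) for t, p in probs.items()})
```

A real model repeats this step, appending each sampled token to the context, until the response is complete.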

Operating in the Realm of Symbols

LLMs work primarily with symbols — linguistic patterns. They operate on probabilities learned from text, not from real-world experience.

Newer models are becoming multimodal (adding vision and other inputs) and agentic (using tools and real-world actions). These steps add some grounding. Still, at their foundation, they remain statistical pattern matchers.

Like a news reporter reading from a script, they can sound knowledgeable without truly understanding the subject.

The Heart of the Critique

Here is the key point: LLMs reason with words, not ideas.

They excel at remixing language to mimic understanding. They are extremely good statistical autocomplete engines. This explains both their remarkable strengths and their confident hallucinations.

Note: Whether this counts as “truly thinking” is ultimately a philosophical question, not a pure engineering fact.

Until LLMs gain much deeper real-world grounding, they will continue to simulate thought rather than truly think.

A Final Thought

In response to your prompts, LLMs may not be getting better at genuine thinking. They are, however, getting very good at telling you what you want to hear.

*This article was researched and composed with the help of GROK AI!


AI Is Enhancing Speech-to-Text*

Turning Spoken Words into Reliable Business Results

You’re on the phone trying to fix a billing issue. You speak clearly: “I need to speak to a representative.” The automated system comes back with, “I’m sorry, I didn’t understand. Did you say ‘account balance’? Please say yes or press one.”

Or you’re driving and tell your phone, “Call Mom,” only to get connected to Tom. Or you dictate a quick note in a noisy office and the text comes out as nonsense.

These frustrations with voice systems are still fresh in many people’s minds. Yet the technology has made real progress. Today’s speech recognition turns spoken words into clean, usable text that supports business operations, from customer service calls to internal note-taking and team collaboration. Here’s how the process works now, how it got here, and what it delivers in practice.

How Modern Speech Recognition Works

The core approach is straightforward: a single end-to-end model takes raw sound and produces text, replacing the older mix of hand-written rules and separately built acoustic, pronunciation, and language models. It works probabilistically: it calculates which words are most likely, based on patterns learned from huge amounts of real audio, then selects the best match.

The flow breaks down into these practical steps:

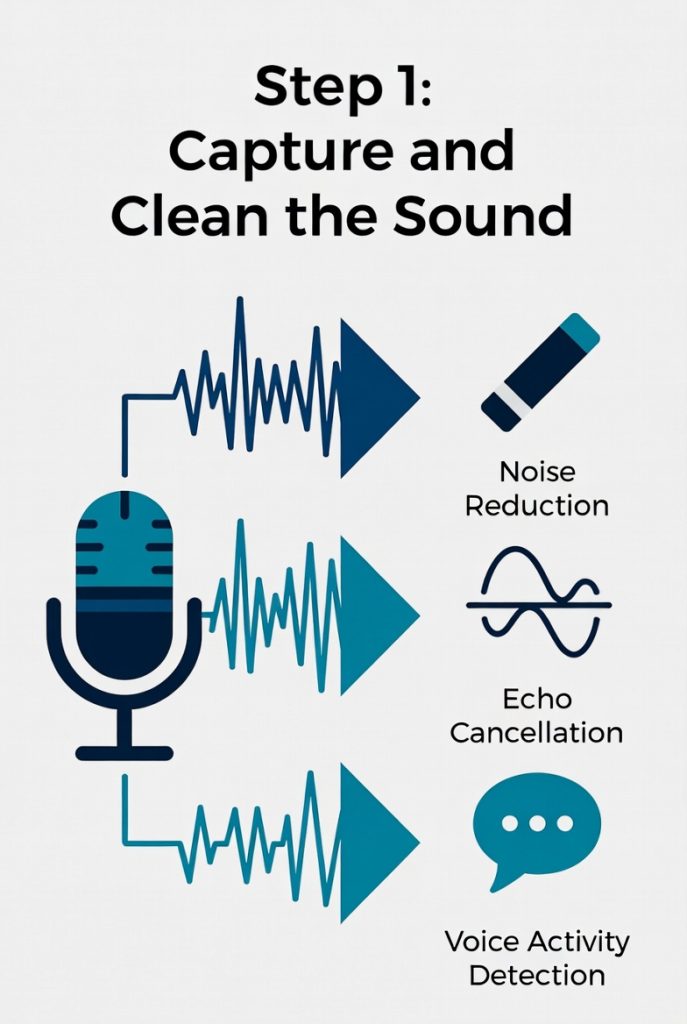

1. Capture and Clean the Sound

The microphone records the audio waveform. The system then suppresses background noise, cancels echoes, and detects when someone is actually speaking (voice activity detection), even in a moving car or busy room.
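Modern systems generally detect speech with trained neural models, but the classic idea can be sketched with a simple energy threshold: split the audio into short frames and flag the loud ones as speech. The 16 kHz sample rate, 160-sample (10 ms) frames, threshold of 0.1, and synthetic test signal are all assumptions chosen for illustration.

```python
import math
import random

def frame_energies(samples, frame_size):
    """Split samples into fixed-size frames and compute each frame's RMS energy."""
    energies = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        energies.append(math.sqrt(sum(s * s for s in frame) / frame_size))
    return energies

def detect_speech(samples, frame_size=160, threshold=0.1):
    """Mark each frame as speech (True) when its energy exceeds a fixed threshold."""
    return [e > threshold for e in frame_energies(samples, frame_size)]

# Synthetic signal at an assumed 16 kHz: 0.1 s of faint noise, 0.1 s of a
# loud 200 Hz tone standing in for a voice, then 0.1 s of faint noise again.
rate = 16000
rng = random.Random(1)
noise = [rng.uniform(-0.01, 0.01) for _ in range(1600)]
tone = [0.5 * math.sin(2 * math.pi * 200 * n / rate) for n in range(1600)]
signal = noise + tone + noise

flags = detect_speech(signal)
print("".join("S" if f else "." for f in flags))
```

Each character is one 10 ms frame: `.` for silence or faint noise, `S` for speech. A fixed threshold like this breaks down in loud environments, which is why practical systems rely on more robust, learned detectors.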

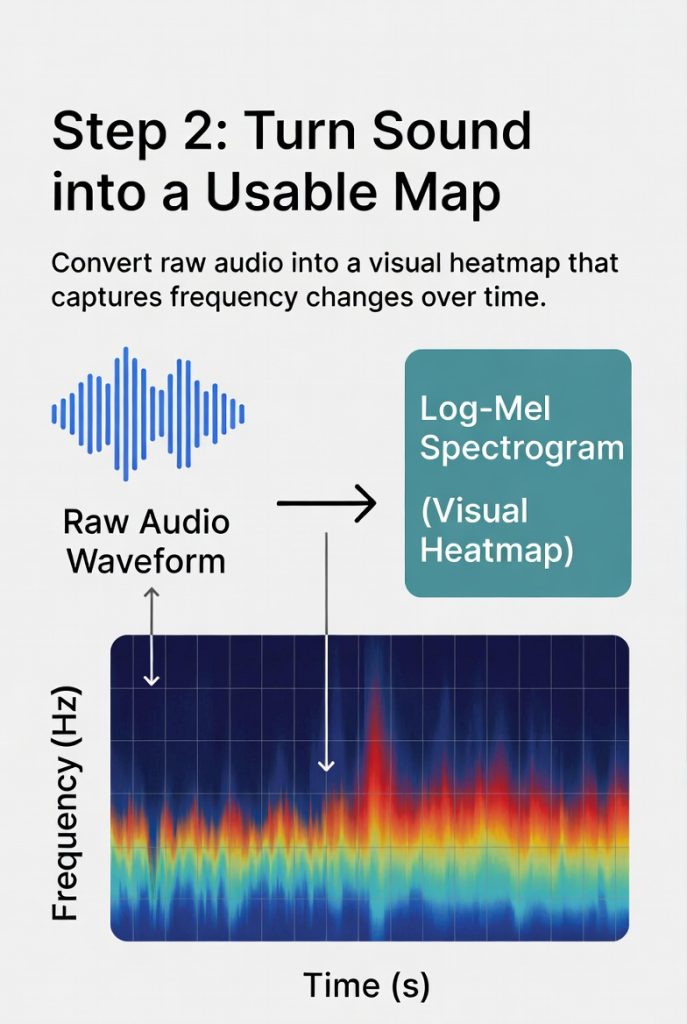

2. Turn Sound into a Usable Map

The audio is converted into a spectrogram: a heatmap showing how much energy each frequency carries over time. This format lets the system spot the patterns that match human speech.
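A minimal spectrogram sketch: slide a short window along the signal and measure the strength of each frequency in every window with a discrete Fourier transform. This version uses a slow direct DFT for clarity; real systems typically use a fast Fourier transform and usually convert the result to a log-mel scale. The 8 kHz rate, 64-sample window, 32-sample hop, and 1 kHz test tone are illustrative assumptions.

```python
import cmath
import math

def dft_magnitudes(frame):
    """Magnitude of each frequency bin 0..N/2 using a direct DFT (slow but simple)."""
    n = len(frame)
    mags = []
    for k in range(n // 2 + 1):
        total = sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        mags.append(abs(total))
    return mags

def spectrogram(samples, frame_size=64, hop=32):
    """Slide a Hann-windowed frame along the signal; each row is one time step."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * t / frame_size) for t in range(frame_size)]
    rows = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = [s * w for s, w in zip(samples[start:start + frame_size], window)]
        rows.append(dft_magnitudes(frame))
    return rows

# Synthetic signal at an assumed 8 kHz sample rate: a pure 1 kHz tone.
rate = 8000
signal = [math.sin(2 * math.pi * 1000 * n / rate) for n in range(512)]
spec = spectrogram(signal)
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
print(len(spec), "time steps x", len(spec[0]), "frequency bins")
print("peak at", peak_bin * rate / 64, "Hz")
```

Each row of `spec` is one time step and each column one frequency band, so plotting it as colors gives the heatmap; here the energy peaks in the band at 1,000 Hz, matching the test tone.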

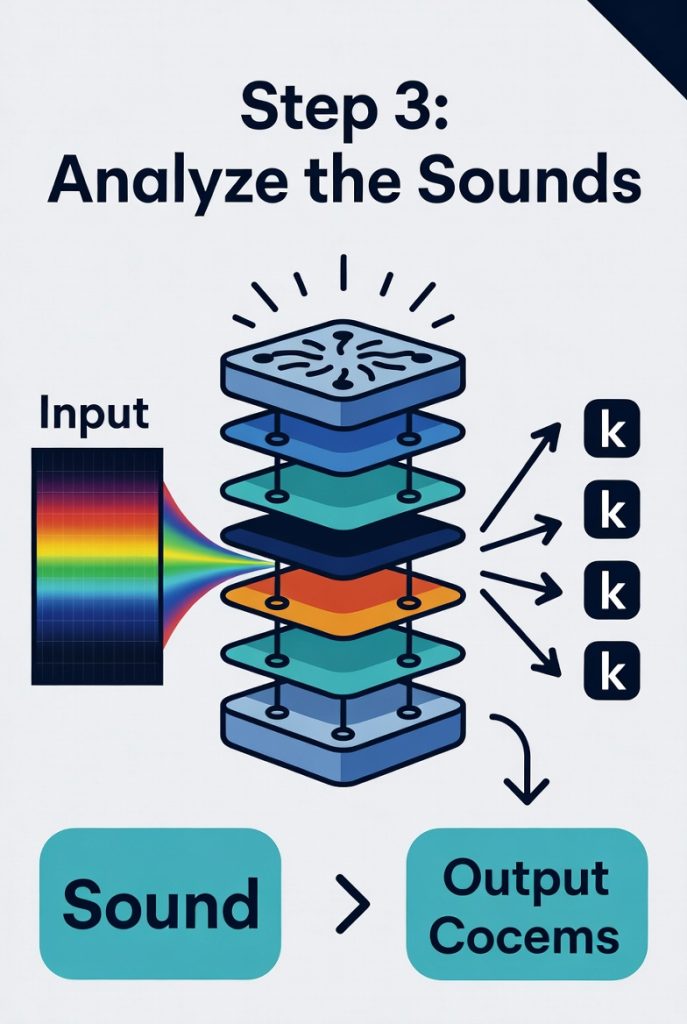

3. Analyze the Sounds

A Transformer-style network examines the map. It identifies basic sound units (like the “k” sound at the start of “cat”) while keeping track of longer patterns and context.
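How any particular product turns frame-by-frame predictions into text varies. One widely used method in end-to-end models is connectionist temporal classification (CTC); the simplest CTC decoder picks the most likely unit in each audio frame, merges repeats, and removes a special “blank” symbol. The per-frame probabilities below are made up, and letters stand in for the sound units.

```python
BLANK = "_"

def ctc_greedy_decode(frame_probs, labels):
    """Pick the most likely label per frame, merge repeats, then drop blanks."""
    best = [labels[max(range(len(p)), key=p.__getitem__)] for p in frame_probs]
    output = []
    previous = None
    for label in best:
        # Keep a label only when it differs from the previous frame and is not blank.
        if label != previous and label != BLANK:
            output.append(label)
        previous = label
    return "".join(output)

labels = [BLANK, "c", "a", "t"]
# Made-up per-frame probabilities over [blank, c, a, t] for six audio frames.
frame_probs = [
    [0.1, 0.8, 0.05, 0.05],  # c
    [0.2, 0.7, 0.05, 0.05],  # c again (merged with the previous frame)
    [0.7, 0.1, 0.1, 0.1],    # blank
    [0.1, 0.05, 0.8, 0.05],  # a
    [0.6, 0.1, 0.1, 0.2],    # blank
    [0.1, 0.05, 0.05, 0.8],  # t
]
print(ctc_greedy_decode(frame_probs, labels))
```

The blank symbol is what lets the model spell genuinely doubled letters: frames reading `l`, blank, `l` decode to “ll”, while `l`, `l` collapse to a single “l”.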

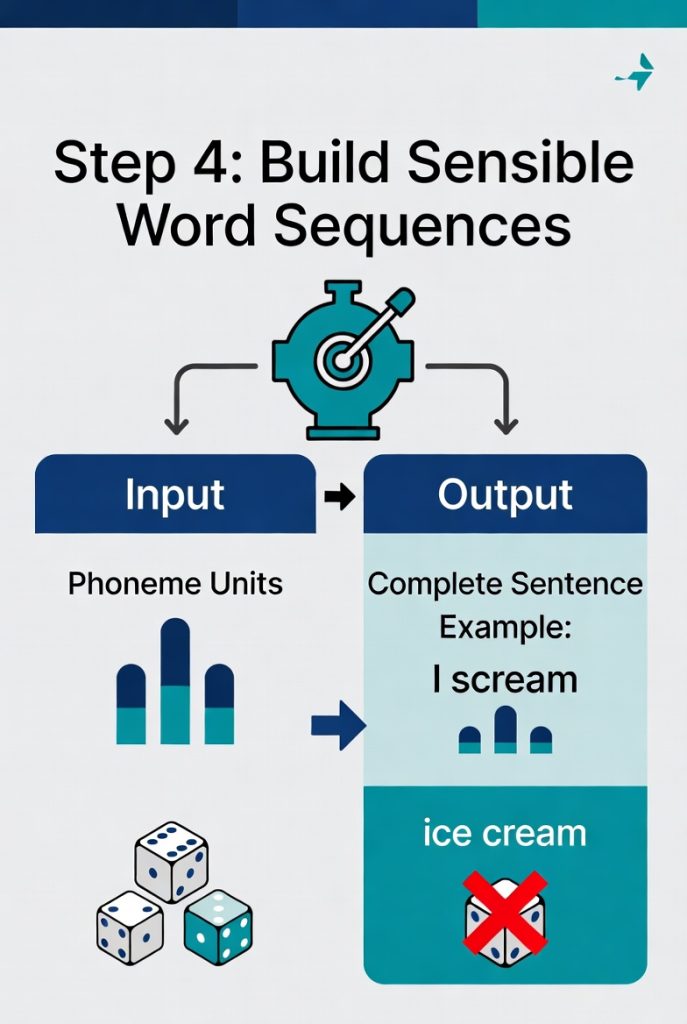

4. Build Sensible Word Sequences

The system predicts full words and sentences that fit grammar and meaning. It weighs probabilities: for example, “ice cream” makes more sense in most sentences than the identical-sounding “I scream.”
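The “ice cream” versus “I scream” choice can be sketched as a weighted sum of two log-probabilities: how well each phrase matches the audio (acoustic score) and how likely the phrase is as language (language-model score). This classic rescoring scheme is shown for illustration only; end-to-end systems may fold the language model in differently. All scores and the `lm_weight` parameter are hypothetical, imagining the phrase appears in a sentence about dessert.

```python
import math

# Hypothetical scores. Both phrases sound identical, so the acoustic model
# gives them the same likelihood; the language model breaks the tie.
candidates = {
    "ice cream": {"acoustic": 0.50, "language": 0.020},
    "I scream": {"acoustic": 0.50, "language": 0.002},
}

def combined_score(scores, lm_weight=1.0):
    """Log-linear combination: log P(audio | words) + lm_weight * log P(words)."""
    return math.log(scores["acoustic"]) + lm_weight * math.log(scores["language"])

best = max(candidates, key=lambda c: combined_score(candidates[c]))
print(best)
```

With equal acoustic scores, the phrase the language model considers more likely wins.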

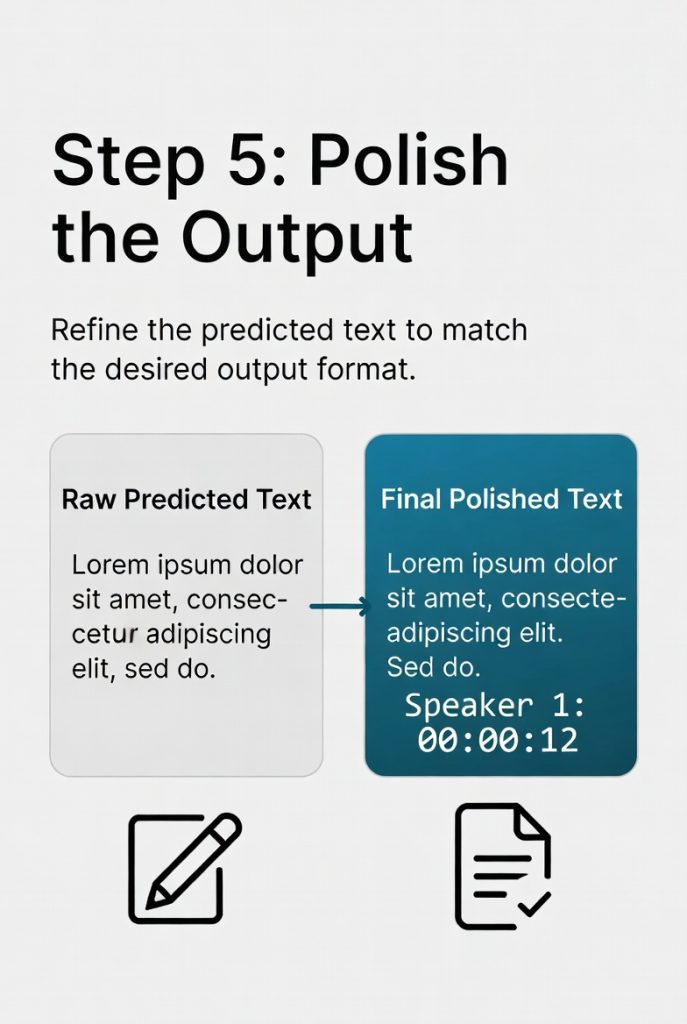

5. Polish the Output

It adds punctuation, capital letters, speaker labels, and timestamps automatically. The result is ready-to-use text.
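The polishing step can be illustrated with a toy formatter that attaches timestamps and speaker labels to raw recognizer segments. The `Segment` structure, sample segments, and rule-based `polish` helper are hypothetical; real systems predict punctuation and capitalization with trained models and often identify speakers through a separate diarization step rather than simple rules like these.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    speaker: str
    start: float  # seconds from the start of the recording
    text: str

def format_timestamp(seconds):
    """Render seconds as MM:SS."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"

def polish(text):
    """Toy clean-up: capitalize the first letter and add a final period if missing."""
    text = text.strip()
    if not text:
        return text
    text = text[0].upper() + text[1:]
    return text if text[-1] in ".?!" else text + "."

def render_transcript(segments):
    return "\n".join(
        f"[{format_timestamp(s.start)}] {s.speaker}: {polish(s.text)}" for s in segments
    )

# Hypothetical raw recognizer output for a short support call.
segments = [
    Segment("Agent", 0.0, "thanks for calling"),
    Segment("Caller", 3.4, "I need to speak to a representative"),
    Segment("Agent", 65.2, "one moment please"),
]
print(render_transcript(segments))
```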

All of this runs in a single trained model, rather than in the separately built parts that older methods required.

How the Technology Evolved

Speech recognition has followed a clear path of improvement over seventy years.

Early systems in the 1950s and 1960s were rule-based and very limited. They recognized digits or a handful of words only under perfect conditions and required careful, slow speaking.

From the 1970s into the 2000s, statistical models, especially hidden Markov models, took over. Research projects expanded vocabularies and began handling full sentences of continuous speech. These systems improved accuracy but often needed training on each user’s voice and struggled with noise or different accents.

The 2010s brought deep learning networks that replaced the old statistical approaches. Voice assistants became widely available, worked for most speakers, and handled real-world conditions much better.

In recent years the biggest gains have come from end-to-end models trained on massive collections of audio. These systems now manage accents, background noise, and conversations across languages with far greater consistency. The latest technology delivers solid, dependable performance where it matters most in business operations.

The End Result: Spoken Words Turned into Actionable Text

The output is clear, punctuated text with timestamps and speaker identification when needed. You can edit it, search it, or feed it straight into other systems for follow-up action.

Leading approaches today achieve strong accuracy in everyday situations — meetings, customer calls, field notes. Doctors capture visit details without stopping their work. Drivers issue commands safely. Global teams transcribe discussions across languages in real time.
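Speech recognition accuracy is conventionally reported as word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn the system’s output into a correct reference transcript, divided by the number of reference words. No specific figures are given here, so this sketch only shows how the metric is computed, using the “Call Mom” versus “Tom” mix-up from the opening as the example.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / number of reference words,
    computed with a word-level edit-distance table."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # dist[i][j] = edits needed to turn the first i reference words into the first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i
    for j in range(len(hyp) + 1):
        dist[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(dist[i - 1][j] + 1,         # deletion
                             dist[i][j - 1] + 1,         # insertion
                             dist[i - 1][j - 1] + cost)  # substitution or match
    return dist[len(ref)][len(hyp)] / len(ref)

print(word_error_rate("call mom on her cell", "call tom on her cell"))
```

Here one substituted word out of five gives a WER of 0.2, or 20%; lower is better.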

The real value shows up in operations: fewer errors, faster follow-through, and less time wasted repeating or correcting information. As the technology continues to improve with current deep-learning methods, speech recognition is becoming standard infrastructure that lets people communicate naturally while the system handles the rest.

It is a clear example of artificial intelligence meeting real work where it happens — through voice — and turning that input into reliable, usable results.

*This article was researched and composed with the help of GROK AI!
